What is KSQL Kafka?

Likewise, people ask, is KSQL open source?

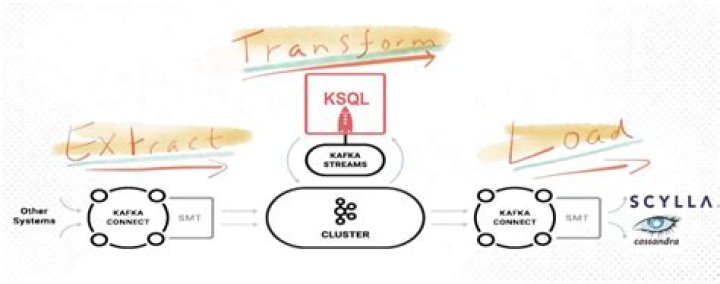

KSQL was released as an open-source, Apache 2.0 licensed streaming SQL engine on top of Apache Kafka; newer releases are distributed under the Confluent Community License. It aims to simplify stream processing and make it available to everyone. Typical use cases include streaming ETL, real-time stream monitoring, and anomaly detection.
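To make the anomaly detection use case concrete, here is a minimal Python sketch of the idea behind a continuous query: each new reading is compared against a sliding window of recent values. It is not KSQL and does not talk to Kafka; the function name `detect_anomalies`, the window size, and the threshold are all illustrative assumptions.

```python
from collections import deque
from statistics import mean, pstdev


def detect_anomalies(readings, window_size=5, threshold=3.0):
    """Yield (index, value) for readings far from the recent window's mean.

    Hypothetical helper for illustration only; a real deployment would
    express this as a streaming query over a Kafka topic.
    """
    window = deque(maxlen=window_size)
    for index, value in enumerate(readings):
        # Only evaluate once the window holds enough history.
        if len(window) == window_size:
            mu = mean(window)
            sigma = pstdev(window)
            # Flag values more than `threshold` standard deviations away.
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                yield index, value
        # The newest reading joins the window; the oldest drops out.
        window.append(value)


# Made-up sensor readings with one obvious spike.
readings = [10, 11, 10, 12, 11, 10, 50, 11, 10, 12]
anomalies = list(detect_anomalies(readings))
print(anomalies)
```

The generator processes one event at a time and keeps only a bounded window in memory, which is the property that lets this style of query run over an unbounded stream.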

One may also ask, what is database streaming? Database streaming, or DB streaming, is a form of data streaming designed to deliver real-time insight that can improve business competitiveness. Kafka is a fast, scalable and durable publish-subscribe messaging system that can support data stream processing by simplifying data ingest.

Moreover, how do you use Confluent?

Confluent Platform Quick Start (Local)

- Step 1: Download and Start Confluent Platform. Go to the downloads page and choose Confluent Platform.

- Step 2: Create Kafka Topics.

- Step 3: Install a Kafka Connector and Generate Sample Data.

- Step 4: Create and Write to a Stream and Table using KSQL.

- Step 5: Monitor Consumer Lag.

- Step 6: Stop Confluent Platform.
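Step 5 refers to consumer lag, which is the gap between the newest offset in each partition and the offset a consumer group has committed. The following Python sketch shows only that arithmetic; the function name `consumer_lag` and the sample offsets are assumptions, and treating a partition with no committed offset as starting from 0 is a simplification.

```python
def consumer_lag(end_offsets, committed_offsets):
    """Return per-partition lag: latest offset minus the committed offset.

    Hypothetical helper; both arguments map partition number -> offset.
    """
    lag = {}
    for partition, end_offset in end_offsets.items():
        # Simplifying assumption: no committed offset means nothing consumed.
        committed = committed_offsets.get(partition, 0)
        # Clamp at zero so stale end offsets never produce negative lag.
        lag[partition] = max(end_offset - committed, 0)
    return lag


# Made-up offsets for a three-partition topic.
end_offsets = {0: 120, 1: 95, 2: 40}
committed_offsets = {0: 100, 1: 95}

lag = consumer_lag(end_offsets, committed_offsets)
total_lag = sum(lag.values())
print(lag, total_lag)
```

A lag that keeps growing over time suggests the consumers are not keeping up with the producers.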

Is Confluent Platform free?

The Confluent Platform is free and open source (see for the source).

Related Question Answers

What is the difference between Apache Kafka and Confluent Kafka?

Apache Kafka includes a Java client. If you use a different language, Confluent Platform may include a client you can use. Confluent adds HDFS, JDBC and Elasticsearch connectors. REST Proxy adds a REST API to Apache Kafka, so you can use it from any language or even from your browser.

What is KSQL used for?

KSQL is the streaming SQL engine for Apache Kafka®. It provides an easy-to-use yet powerful interactive SQL interface for stream processing on Kafka, without the need to write code in a programming language such as Java or Python. KSQL is scalable, elastic, fault-tolerant, and real-time.

Why Confluent?

Confluent was founded by the original creators of Apache Kafka®. Confluent Platform makes it easy to build real-time data pipelines and streaming applications by integrating data from multiple sources and locations into a single, central Event Streaming Platform for your company.

How does Confluent make money?

The startup makes money by selling professional services and commercial tools aimed at making the event processing platform easier to use. Confluent's flagship offering is a Kafka distribution called the Confluent Platform. The startup claims to have grown total subscription bookings by 350 percent in 2018.

What is stream processing in big data?

Stream processing is a big data technology. It is used to query a continuous data stream and detect conditions quickly, within a short time of receiving the data.

What is Confluent Platform?

The Confluent Platform is a streaming platform that enables you to organize and manage data from many different sources with one reliable, high-performance system.

Is Confluent a public company?

Confluent has exceeded $100 million in annual bookings, Forbes reported. For Microsoft, the Confluent stake remains such a tiny drop in the bucket, even after multiplying by many times, that the company has never discussed it publicly.

How do I push data to Kafka?

Quickstart

- Step 1: Download the code. Download the 2.4.

- Step 2: Start the server.

- Step 3: Create a topic.

- Step 4: Send some messages.

- Step 5: Start a consumer.

- Step 6: Setting up a multi-broker cluster.

- Step 7: Use Kafka Connect to import/export data.

- Step 8: Use Kafka Streams to process data.

How do I connect to Kafka?

Approach

- Install a Kafka server instance locally for evaluation purposes.

- Run the Kafka server and create a new topic.

- Configure the local Atom with the Kafka client libraries.

- Create an AtomSphere integration process to publish messages to the Kafka topic via Groovy custom scripting.

How does Kafka Connect work?

Kafka Connect works with Spark Streaming to let you ingest and process a constant stream of data. Ewen used the example of streaming from a database as rows change, but you can also ingest logs, Twitter streams, or anything else that is changing. You can aggregate and join different streams of data for your application.

How do I start Kafka locally?

Make sure you run the commands for each step below in a separate terminal or shell window, and keep each window running.

- Step 1: Download Kafka and extract it on the local machine. Download Kafka from this link.

- Step 2: Start the Kafka Server.

- Step 3: Create a Topic.

- Step 4: Send some messages.

- Step 5: Start a consumer.
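Steps 3 to 5 rest on one idea: a topic is an append-only log, and a consumer tracks its own position (offset) in it. The Python sketch below models that in memory. It is a toy for intuition, not the Kafka client API; the class `InMemoryTopic` and its methods are hypothetical names.

```python
class InMemoryTopic:
    """Toy single-partition topic: an append-only list of messages."""

    def __init__(self, name):
        self.name = name
        self._log = []

    def send(self, message):
        """Append a message and return the offset it was stored at."""
        self._log.append(message)
        return len(self._log) - 1

    def poll(self, offset, max_records=10):
        """Return up to max_records messages starting at the given offset."""
        return self._log[offset:offset + max_records]


# Step 3: create a topic. Step 4: send some messages.
topic = InMemoryTopic("test")
topic.send("This is a message")
topic.send("This is another message")

# Step 5: a consumer reads from its current offset, then advances it.
consumer_offset = 0
received = topic.poll(consumer_offset)
consumer_offset += len(received)

# Messages sent later are picked up from the saved offset, not re-read.
topic.send("A third message")
later = topic.poll(consumer_offset)
print(received, later)
```

Because reading never removes messages, a second consumer starting at offset 0 would see all three messages again.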

How do I install Confluent?

Install Kafka Confluent Open Source on Ubuntu

- Update packages. $ sudo apt-get update

- Install Confluent Open Source Platform. $ sudo apt-get install confluent-platform-oss-2.11

- Start Confluent. You can start all or some of the services with the start command of the confluent command-line interface.

How do I run Confluent Kafka on Windows?

How to run Confluent Platform on Windows

- Start ZooKeeper Server. From confluent-3.0.

- Start Kafka Server. kafka-server-start.

- Start Schema Registry: schema-registry-start.bat.

- Start producer. kafka-console-producer.

- Start Consumer. kafka-console-consumer.

- Then start sending messages from the producer; you should receive them on the consumer.